Depthkit Studio interface

The Depthkit Studio interface follows many of the same conventions as the Depthkit Core interface. Features unique to Depthkit Studio are listed below.

Context Selection

In addition to the contexts available in the Depthkit Core interface, Depthkit Studio provides the Studio Calibration & Record context, where you can create calibrations and recordings with multiple sensors, also known as Depthkit Studio captures.

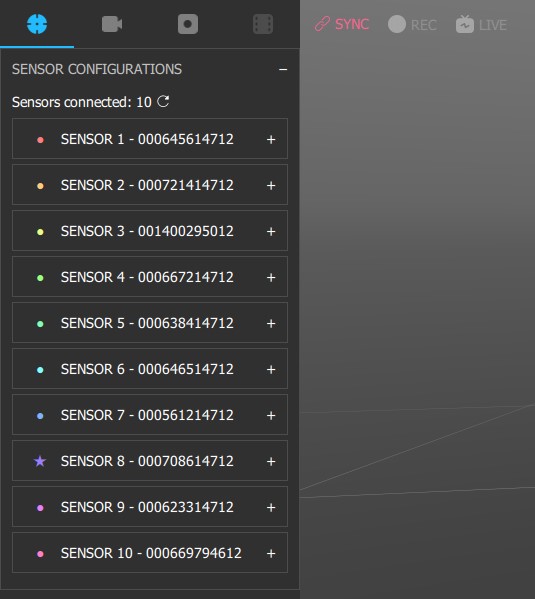

Studio Sensor Configuration Panel

Here, sensors can be configured as described in the configuring sensor settings section, with additional information specific to Depthkit Studio, including sensor status.

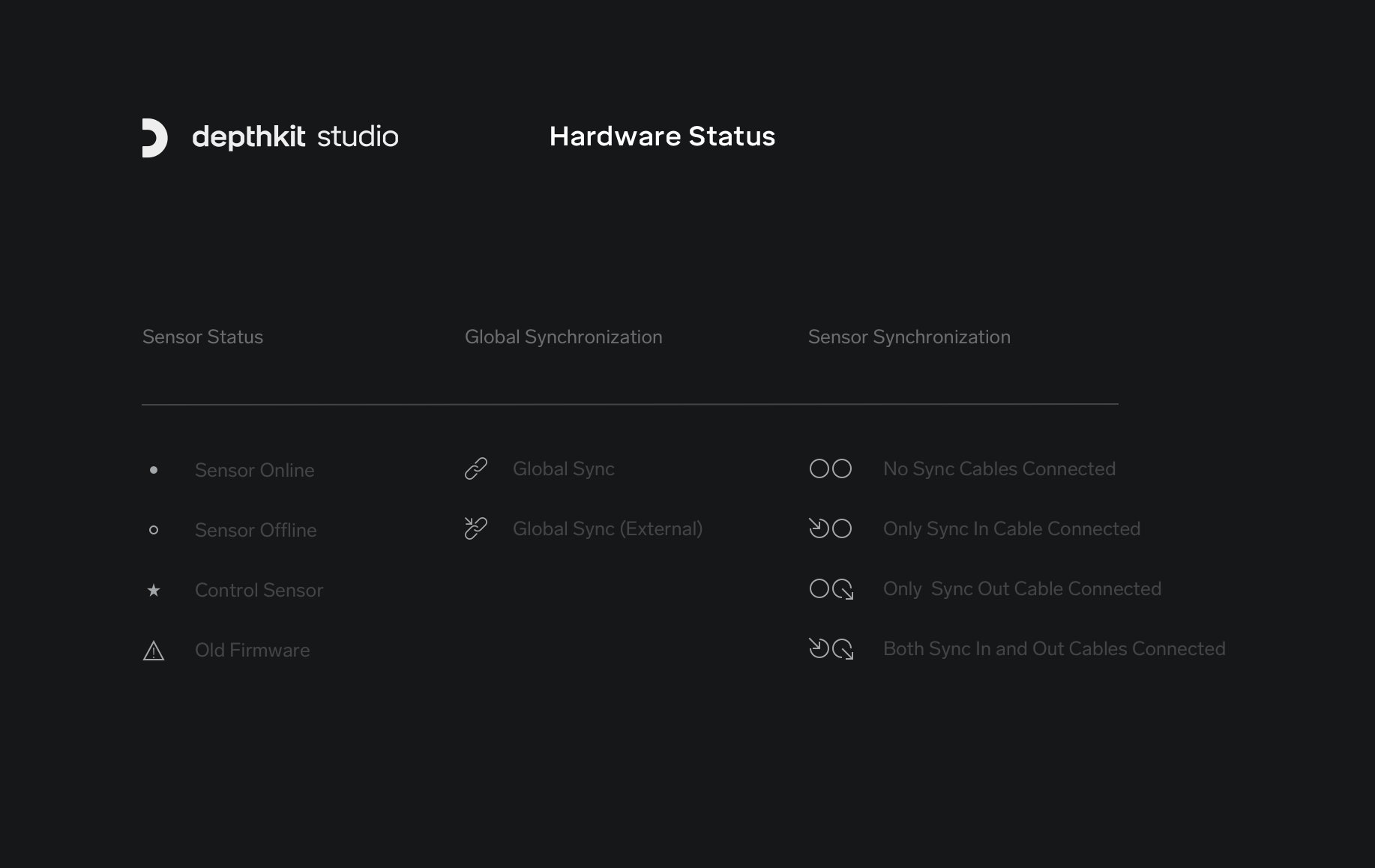

In the Studio context, sensors appear with a Sync Icon next to them. See the icon key below and the Sensor sync section for more information.

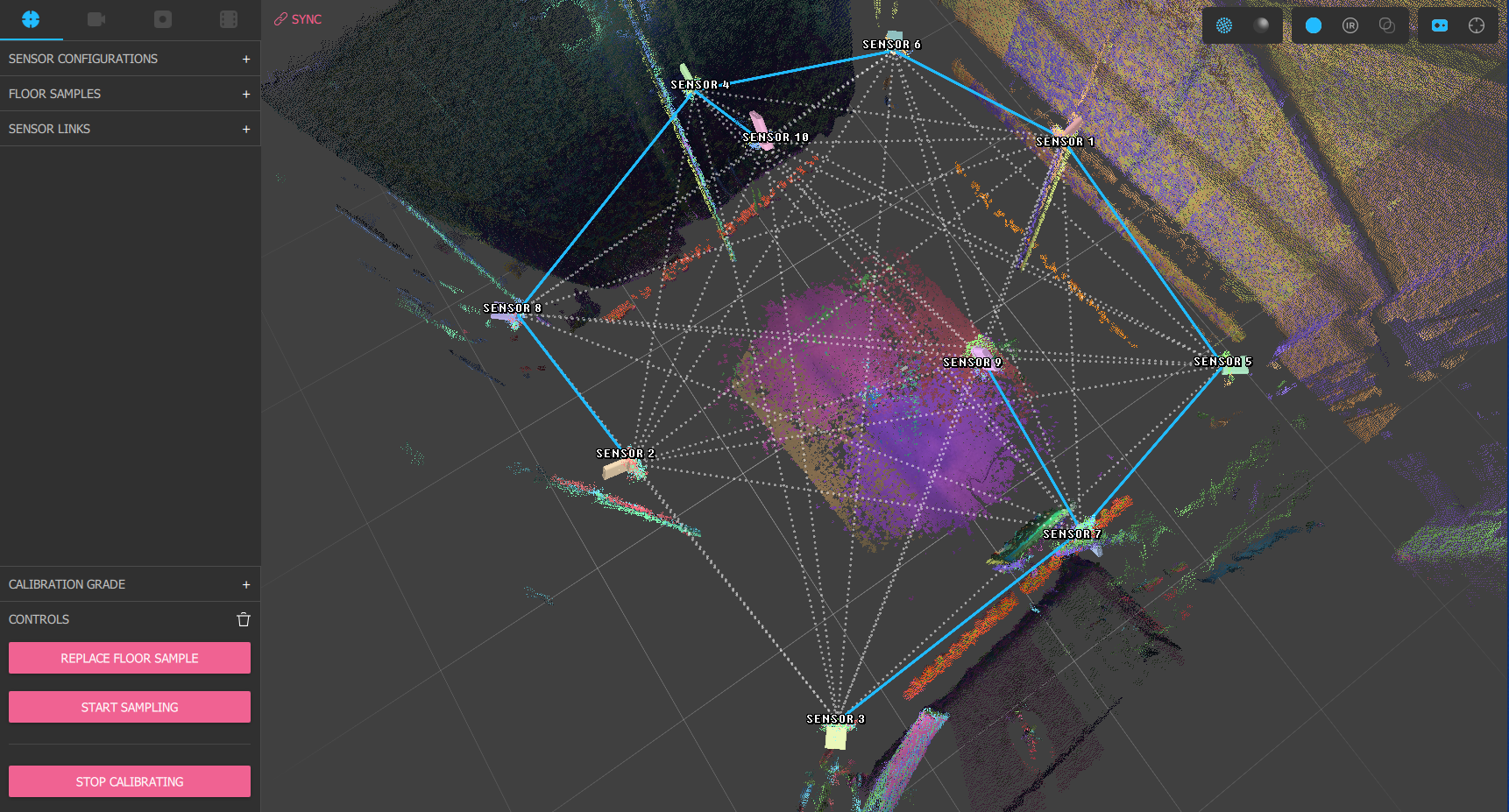

Studio Streaming 3D Viewport

Status Indicators

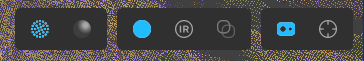

RGB Texturing textures the pointcloud or meshes with color data from the sensor RGB cameras.

Infrared Texturing textures the pointcloud or meshes with luminance data from the sensor infrared cameras.

Edge-detection Diagnostic View (Mesh-Only) highlights edges in the color data from the sensor RGB cameras, enabling instant identification of calibration misalignment or defective sensors.

Toggle Sampled Marker Visualization shows/hides 3D representations of the positions where all samples have been captured in the 3D viewport based on the current calibration.

🚧 Depth Bias applied in RGB Texturing preview

When viewing the 3D viewport with RGB texturing enabled, a depth bias is applied. This compensates for how the Femto Bolt and Azure Kinect detect the distance of human skin. The depth bias makes the shape of human faces look more natural, but can also make other materials, such as clothing, look slightly "inflated". The bias is disabled when viewing the 3D viewport in IR or Edge-Detection mode, and does not affect recordings.

Icon Key